Sendgrid pricing plans explained

10pm, 5th May 2013 - Web, Developer

Running your own mail delivery servers is difficult. It's easy to get it wrong and end up on every blacklist available. Even if you aren't on blacklists, there are still plenty of ways to end up sending your emails to the bit bucket instead of your customers. Sendgrid's promise is to run your mail servers for you and do it right. But which pricing plan should you choose?

Sendgrid have pricing plans available to suit everyone from fresh startups with more co-founders than customers to multinationals with more customers than Australia has sheep.

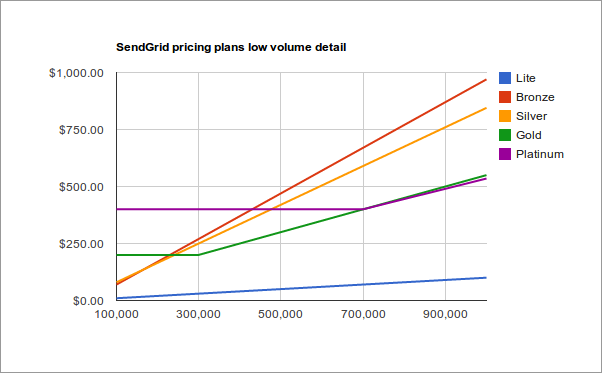

While these pricing plans seem quite simple, getting the best value for money can be a bit tricky. When exactly is it worth upgrading from Silver to Gold? The Silver plan comes with 100,000 credits but if you thought the right time to upgrade was as soon as you are sending more than 100,000 emails per month you would be wrong. The Silver plan is still cheaper than the Gold plan when you are sending 200,000 emails per month.

When should you upgrade from Gold to Platinum? Not at 300,000 and probably not even at 700,000. Above 700,000 emails per month there is so little difference between these two plans that even at a million emails per month you are only paying $15 extra on the Gold plan. But if you have a quiet month and dip below 600,000, you are $50 worse off on the Platinum plan. It's safer to stay on the Gold plan if your email volume is at all variable.

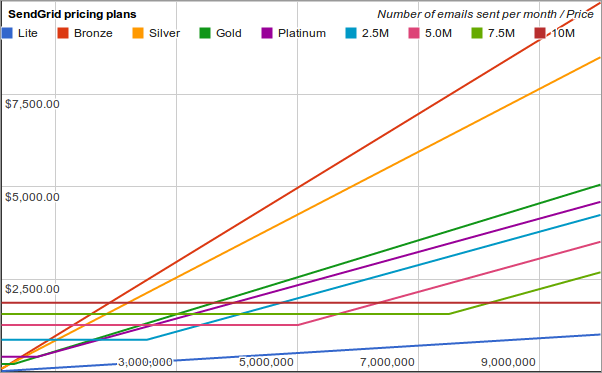

To help SocialGO figure this out, I plotted all the pricing plans on a pair of charts which I have reproduced below:

To use the charts, simply find the number of emails you expect to send each month on the X-axis and move upwards until you find the lowest price line. That's the cheapest plan for your chosen number of emails.

For some companies it's difficult to predict how many emails you will send each month. If you know that your sending volume could swing up or down by 30% each month, find the upper and lower volumes of emails you could send and choose the line that has the lowest average in between those two points.
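The same reading of the charts can be sketched in code. This is a minimal sketch, assuming each plan is a flat monthly fee plus a per-email charge beyond the included credits. The plan names and every figure in `PLANS` are made up for illustration and are not Sendgrid's prices, so substitute the numbers from the pricing page before trusting the answer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    name: str
    monthly_fee: float        # flat fee in dollars
    included_emails: int      # credits covered by the flat fee
    overage_per_email: float  # dollars per email beyond the credits

    def cost(self, emails: int) -> float:
        """Monthly cost of sending `emails` emails on this plan."""
        extra = max(0, emails - self.included_emails)
        return self.monthly_fee + extra * self.overage_per_email


# Made-up plans for illustration only. These are NOT Sendgrid's prices.
PLANS = [
    Plan("small", 80.0, 100_000, 0.002),
    Plan("medium", 400.0, 300_000, 0.0008),
    Plan("large", 700.0, 700_000, 0.0006),
]


def cheapest_at(emails, plans=PLANS):
    """The lowest line on the chart at a single point on the X-axis."""
    return min(plans, key=lambda p: p.cost(emails))


def cheapest_over_range(low, high, plans=PLANS, samples=101):
    """The plan with the lowest average cost between two volumes."""
    step = (high - low) / (samples - 1)
    volumes = [round(low + i * step) for i in range(samples)]
    return min(plans, key=lambda p: sum(p.cost(v) for v in volumes) / samples)
```

For an expected 250,000 emails with a 30% swing either way, `cheapest_over_range(175_000, 325_000)` compares the average cost of each plan across that range rather than at a single point.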

It gets a bit small and difficult to read down at the lower end of the price plans so here's a detailed version for those sending fewer than a million emails per month:

Both of these charts start at 100,000 emails per month. Below that the plans differ in both features and price so the chart would not be giving you the full picture. The Lite plan shown as a blue line at the bottom also differs quite significantly in its features. There's a reason it is so much cheaper. It compares quite closely to Amazon's SES offering. Amazon only have one plan but they charge you separately for bandwidth and they don't offer all the same features as Sendgrid.

Sendgrid also have higher volume plans. On their pricing plans page it simply says to contact them if you plan on sending more than 1.7 million emails per month. When I did contact their sales department, I got a response with the plans labeled 2.5M, 5.0M, 7.5M and 10M on the first chart.

No one wants to pay too much for their email service. With these charts, you can optimise your Sendgrid plan so that you don't waste any money.

The Middle Name Guesser

5am, 27th January 2009 - Geek, Interesting, Developer

I have recently made some improvements to the Middle Name Guesser (one of which was to make it actually work again) and I'd like to take this opportunity to invite you to have it guess your middle name... or your friend's middle name, or your favourite celebrity's middle name.

I have also added a couple of statistics graphs and you can clearly see exactly when I fixed that pesky little bug that only showed up when it actually guessed your middle name correctly. (It was a typo I introduced the last time I edited the file - a strong argument for automated testing if ever I heard one.) At that point it was getting about 1 in 20 guesses correct. Since then it has been steadily improving up to a peak of getting 1 in 4 guesses correct. 1 in 4 guesses correct is better than I ever hoped it would achieve. I was originally thinking that 1 in 10 would be a good result. Now I'm wondering if it will get to 1 in 2...

I expect to see the ratio of correct to incorrect guesses remain relatively unstable until the number of new, unique middle names, first names and last names (the red, blue and purple lines) starts flattening out. After that the ratio should only improve as the relationships between the known first and last names and middle names are strengthened.

The air powered car

9am, 15th January 2008 - Geek, Interesting, Hardware, Science

There's an air powered car that has been causing some hype recently (which, I suppose, is considered "fuel" for this new car. Heh.) and, while it's not all that new, some people are cautiously (and not so cautiously) predicting that "2008 is the year of the air powered car". As a born skeptic, I felt the urge to play devil's advocate.

My first thought was that the compressed air has to come from somewhere and that the process of compressing the air would require energy from more traditional sources. This technology isn't a new way of generating or extracting energy. Much like the talk of Hydrogen-powered cars, this is a new method of storing energy in cars that has been generated somewhere else. Most of these sorts of schemes don't help reduce pollution, they just offset it somewhere else. While this is good for people who live in cities, it's not any better for the planet as a whole.

But there may be more to this plan than just offsetting the pollution. A compressed-air powered car has a few advantages over a Hydrogen powered car: Hydrogen has to be converted from its pure state into a form with a lower energy content or higher entropy. This is usually achieved by combining it with Oxygen, which is readily found in the atmosphere. The process of combustion usually takes place inside a modified conventional engine or in a Hydrogen based fuel cell; however, both of these methods generate lots of wasted energy. The power extracted from the Hydrogen comes from the expansion of the gases as they combine. The sound and heat energy that is produced at the same time is dissipated into the environment and is wasted.


A compressed-air powered car, on the other hand, can extract the same gaseous expansion based energy as combustion based cars without the loss of the heat and sound-based energy. There has been some discussion (although the results I found were inconclusive) about whether the process of compressing the air was inefficient enough to offset the gains made with the more efficient power stations and in-car decompression process. The end result of reducing waste energy is that not only would the car cause less noise pollution, but the energy used to actually drive the car could be a greater percentage of the total energy available. Less waste is a good thing.

There are, however, a few elements of the article that caused me some concern. The talk of the compressed air driving the pistons which in turn compress the air makes little sense. This is akin to using an electric motor to drive a generator which powers the electric motor. If it worked, it would violate the law of conservation of energy. I suspect (hope) that an over-enthusiastic reporter snuck this into the article rather than quoting directly from a scientist.


The article also makes no mention of the range of the car apart from stating that there is a long-range version that would be fitted with a conventional engine. This suggests to me that this new car would suffer from the same drawbacks that electric cars suffer from: a range so small that the car is limited to the inner-city commute from home to work. After a quick Google and a visit to Wikipedia, it appears that other sites claim the range would be somewhere between 100 km and 200 km. That's great for those who only need that but I won't be swapping the long-range fuel tank in my Pajero for one of these until it comes closer to the same range. Earlier articles regarding the same technology suggest even lower ranges so with the technology getting better and better, hopefully the air car will achieve that goal eventually.

Filling me with confidence again, the rest of the article shows that Negre (the motivation behind the idea) truly understands the problem of wasted energy. Firstly, the direct quote: "The lighter the vehicle, the less it consumes and the less it pollutes and the cheaper it is; it's simple," is very similar to one of the major principles behind low-energy building design. So often, when you design something inefficiently, you find that you need to waste more energy to fix problems with the design. Cars have added weight to deal with the wasted sound and heat energy which, in turn, requires more energy to carry around. Fridges emit all their heat at the back, which often gets trapped and heats the inside of the fridge back up. Fridges have to use extra energy just to remain below room temperature because the air around the fridge is above room temperature. The less wasted energy a car has, the less weight it needs to carry around to deal with the side-effects of the wasted energy. The less weight it has to carry around, the more you can do with the energy you have. In fact, the expansion of a compressed gas will actually draw in heat - the same way a fridge works - meaning the air can then be used for cooling the interior of the car. An air-conditioner and a radiator are two fewer pieces of machinery this car has to carry around thanks to its more efficient design.


Negre also has plans to use small factories in the same regions where the car is to be sold. This will probably cost slightly more - large scale factories have the advantage of being cheap on a per-car basis - but it will cost the environment less. He stated that the parts would not be shipped to the factory to be assembled but would rather be sourced locally - saving again on the environmental costs of shipping.

It's possible, with the advances in technology we have made, that the whole process may just even turn out cheaper in dollars than shipping the cars half-way around the world. Wasted energy and wasted effort are wasted dollars. If Negre understands this, and I think he does, then this venture should turn a profit for both his bank balance and the environment.

MoneySavingExpert under DDoS attack

11pm, 30th October 2007 - Geek, News, Web, Security, Sysadmin, Hardware

Last weekend, MoneySavingExpert (my old employer) was the subject of what appears to be a fairly hefty DDoS attack. It has been reported on several blogs and shortly afterwards on Digg.

There has been much speculation about why it's happening just now and who could be behind it but, as always, without any data to analyse there's no way of making any guess more accurate than a wild stab in the dark. There has also been much wailing and gnashing of teeth about the powerlessness one feels when being attacked by half the internet. Not that the tech team over at Money Saving Towers were wailing or gnashing their teeth, they just got in and fixed the problem. By Sunday afternoon there was a static holding page up which I could actually request and receive in a browser and by Monday morning the site appeared to be back up and running as usual although I think the forums were still down at that time.

There are some things that can be done when you are the victim of a DoS attack. If MoneySavingExpert can survive it, then so can you.

How you deal with a DoS depends greatly on how it's happening. If you don't already know why your site is down, start trying to find the reason. Log files and aggregated statistics are always the first two places I look.

At my current place of employment, we have a series of graphs generated using Orca and RRDTool for each of our servers. These graphs show us everything from CPU load to disk space used to the number of open TCP connections to the machine's uptime. If a particular server is causing the problem then I can load all of its graphs in a single window and scroll down the list looking for anything unusual. If the problem is with a particular website then I can load up just the servers that website affects. If I don't know which part of our system is the cause of the downtime, then I can load them all up.

Unusual patterns in log files can also be an indicator that something is wrong. If I notice that one IP address has requested more web pages than the next ten combined then I start to suspect that something is wrong at that IP address. If I notice that today's log file is twenty times the size of yesterday's log file, then I'm going to want to have a look inside both of them. At this stage, all I'm doing is gathering information because I don't even know if it's a deliberate DoS or just some other sort of site outage. Either way, the log files often hold the answer.
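The "more than the next ten combined" check is easy to automate. Here is a minimal sketch, assuming an access log where the client IP address is the first whitespace-separated field on each line (true of the common Apache and nginx formats, but check your own). The function names are invented for this example.

```python
from collections import Counter


def requests_per_ip(log_lines):
    """Count requests per client IP, taking the first field of each line as the IP."""
    counts = Counter()
    for line in log_lines:
        fields = line.split()
        if fields:
            counts[fields[0]] += 1
    return counts


def dominant_ip(counts, runners_up=10):
    """Return the busiest IP if it out-requested the next `runners_up` IPs combined.

    Returns None when no single address stands out. Note that a log with
    only one client in it will always report that client.
    """
    ranked = counts.most_common(runners_up + 1)
    if not ranked:
        return None
    top_ip, top_hits = ranked[0]
    rest = sum(hits for _, hits in ranked[1:])
    return top_ip if top_hits > rest else None
```

Because a file object yields its lines when iterated, something like `dominant_ip(requests_per_ip(open("access.log")))` would do for a first look, with `access.log` standing in for wherever your webserver writes its log.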

There are many different ways a DoS can be caused. Simply flooding a webserver with ten times the normal number of requests it has to deal with is a crude but effective method. This method will often cause your upstream bandwidth provider to start dropping packets because it can't keep up the pace. Even if your webserver could serve all the requests, some of them won't make it all the way there. Other types of DoS exist, however, and it's worth mentioning some of them here.

There are plenty of vulnerabilities in the off-by-one-buffer-overflow category that will cause a program to crash. These are inevitably classed as denial of service vulnerabilities because that's usually all that can be exploited with them. The important thing to note is that you don't need a large botnet or even a small one to cause a DoS to someone using this method. All an attacker needs is a single computer with the ability to anonymise its payload through something like Tor or a list of proxy servers. Every crash (i.e. every request) is going to cause several minutes of downtime.

Another class of DoS attack is caused by requesting a page that causes a lot of resource usage, such as requesting '%' from a badly written search function. If the page is vulnerable, this example will cause the result set of the search to include every row in the database. This will chew up large amounts of CPU and RAM even if it only actually displays the top ten results.
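One way to close that particular hole is to escape the wildcard characters before the search term reaches a LIKE clause, and to let the database apply the limit rather than the display code. The sketch below uses Python's built-in sqlite3 module purely as an illustration; the `posts` table and the function names are hypothetical, and other databases have their own escaping rules.

```python
import sqlite3


def escape_like(term, escape="\\"):
    """Escape LIKE wildcards so '%' and '_' only match themselves."""
    return (
        term.replace(escape, escape + escape)
        .replace("%", escape + "%")
        .replace("_", escape + "_")
    )


def search(conn, term, limit=10):
    """Substring search over a hypothetical posts table."""
    pattern = "%" + escape_like(term) + "%"
    cur = conn.execute(
        # The SQL sent to SQLite reads: ... LIKE ? ESCAPE '\' LIMIT ?
        "SELECT title FROM posts WHERE title LIKE ? ESCAPE '\\' LIMIT ?",
        (pattern, limit),
    )
    return [row[0] for row in cur]
```

With the escaping in place, a search for '%' matches only rows that really contain a percent sign instead of every row in the table.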

A DoS attacker could also request pages that cause lots of logging to occur, hence filling up the victim's file system. I have actually caused this to happen completely by accident on one guy's website. Apparently, in the space of about half an hour I caused 60GB of log files to be generated on their webserver. Luckily, they knew what I was doing and had my phone number so they could ask me to stop.

These sorts of attacks - the ones that cause resource starvation on your webserver - can be caught with an IDS such as Snort, any decent firewall or a dedicated appliance. Once you can identify the packets that are part of the DoS it is simply a matter of knowing how your firewall/IDS is configured and configuring it to drop those packets.

The other sort of DoS attack - the sort that attacks the services that support your site rather than the site itself - cannot be stopped by you. It requires the people who run the failed service to do whatever they need to do to survive the attack. In the case of MoneySavingExpert, it appears that they have requested the services of ProLexic, a company that specialises in mitigating the effects of bandwidth-based DDoS attacks. Essentially, ProLexic point all of the victim's traffic at their own servers, filter out the bad requests and pass the remaining requests on to the real webservers. It's a simple but effective tactic that works against the crude but effective attack.

Little Bobby Tables

5pm, 14th October 2007 - Geek, Humour, Web, Security, Developer

Ahhh xkcd, you've done it again.

There's not enough security humour in this world.

I want to name my cat Tiddles"><script>alert('Foo!');</script> now, just so that I can put that in as the answer to my secret question on Facebook.

I just remembered that xkcd always put a title tag on every image that contains another little joke. I've replicated the title-tag joke for this comic here as well. If you're using Firefox, you can hover over the image to read it.

So many servers, all hacked.

11am, 13th October 2007 - Geek, Interesting, Web, Security, Developer

Yesterday, while trying to track down a problem with one of our forums, I was looking through the validation log and spotted something rather unusual.

The validation log stores all the parameters passed to the forums that failed validation so that we can verify that no legitimate users are being denied access. Parameters include things like which post you are looking at, which thread it's in, which board the thread's in and which page of the thread you are on. Normally, the post number, thread number and page number are all actually numbers but occasionally, somebody thinks it might be a good idea to put something else, like a URL, into the post number parameter.
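The idea behind a validation log like this can be sketched in a few lines. This is not the forum's actual code (which isn't shown here); the parameter names and the logger name are assumptions for illustration. Anything that should be a number and isn't gets rejected and written to the log for later review.

```python
import logging

# Hypothetical logger name; point it at a file of your choosing.
validation_log = logging.getLogger("validation")

# Hypothetical parameter names for post, thread, board and page numbers.
NUMERIC_PARAMS = ("post", "thread", "board", "page")


def validate_params(params):
    """Return the numeric parameters as ints, or None if any fails validation."""
    clean = {}
    for name in NUMERIC_PARAMS:
        raw = params.get(name)
        if raw is None:
            continue  # parameter not supplied
        if raw.isascii() and raw.isdigit():
            clean[name] = int(raw)
        else:
            # Record exactly what was sent so real users caught by a
            # false positive can be spotted afterwards.
            validation_log.warning("rejected %s=%r", name, raw)
            return None
    return clean
```

A URL stuffed into the post number fails the digit check, so it ends up in the log instead of anywhere near an include statement.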

In this case, it was a misguided hacking attempt aimed at a completely different piece of software than the one we are running. We didn't have the vulnerability he was trying to exploit. Had it been aimed at the correct software and succeeded, it would have changed the parameter so that instead of including a PHP file from the webserver, it would have included a file from someone else's webserver and run that file just as it does when the file is local. The difference is that the code from the other webserver would have installed a rootkit, a command and control interface, a couple of new users and finally sent a message back to its owner telling him where we were.

Unfortunately, people who try to seize control of other people's webservers are a paranoid lot. They don't usually just start hacking from their home computer and head straight for the target. They will use Tor or an anonymous proxy to mask their true identities. They'll use webservers that they have already cracked to help crack new webservers. In this case, tracing the hacking attempt back to where it came from only led us to another compromised server with a web-based command and control page and the file required to hack other servers.

I didn't pursue it any further for several reasons: I'm not paid to hunt down crackers, it would have been illegal for me to use the compromised server to find out where it had been compromised from and it was an unsuccessful attempt to exploit a vulnerability we didn't even have. Out of interest, I did quickly grep through the entire set of validation logs just to see how many of these attempts there were and from how many already-compromised webservers. The result was astounding. I sat there for minutes, watching the URLs of compromised servers fly past on my screen.

I wasn't all that surprised to see lots of hacking attempts. Just put a machine on the internet running Snort for a day and you'll understand why. What did surprise me was the sheer number of already compromised servers sitting out there. Do people not have intrusion detection systems? Do they not check their log files? Has somebody like me not already noticed that their server has been hacked and emailed to let them know? (For the record, I did email the admin of the first server but once I found the hundreds or thousands in the log files I decided that it was a bit much effort for me...)

Does security not matter to these people?

I suspect that's the answer. Most people are on the net to create something. They aren't interested in learning all about computer security and how to secure their machines. They just want to create their own little corner of the web where they can do as they please.

Security implications of data recovery

4pm, 23rd September 2007 - Geek, Interesting, Security, Developer, Sysadmin, Legal

After last week's data recovery antics, I started looking at what is actually stored in Firefox's crash recovery file (sessionstore.js) and it appears to be ripe and juicy for a bit of password sniffing. A quick search through the file and I found one of my passwords hiding in plain sight along with the associated username. Although the file has restrictive permissions (600), anyone with admin/root privileges would be able to read it. Anyone who can log in with your privileges would be able to read it. Anyone who has access to your computer, even for only a couple of minutes, would be able to read that file.

Sure, "root can already do anything" you say, but this allows whoever is root to gain extra privileges. Privileges on another system where he isn't already root. This is your Gmail password, your MySpace password, your banking password. Maybe, this is the same password you use for all of your accounts on all your social networking websites.

It doesn't seem to matter whether the password is in a "password" field or just a plain text field and it doesn't matter whether the page is encrypted or not. Your password will be stored, with the username it accompanies, in plain text in your home directory.

This isn't just limited to passwords either. What if you logged in under an anonymous name at some forums somewhere so you could blow the whistle on your corrupt boss without fear of sacking? What if you were emailing the blueprints to your next invention to the patent office? What if you were uploading photographs you had taken in secret from your hotel across the road from the US embassy to a Russian spy website? What if something even more unlikely and implausible were to happen that would be devastating to you if it were discovered you were the culprit?

The lesson to learn is that if your data can be recovered by you after a crash, it can be recovered by pretty much anyone at any time. If you're a developer, remember this and think about not storing passwords or at least storing them encrypted.

How to recover your data after a crash

9pm, 17th September 2007 - Geek, Interesting, Apple, Linux, Sysadmin, Hardware

Years ago, back in the days of 33MHz processors and Mac OS 7, my little brother was writing a letter to our Granny when the computer he was using crashed. Crashes were good in those days; you got a little box on your screen with a picture of a bomb in it, a cryptic crash message and a restart button. As I was the resident computer geek, I was immediately called for and asked if anything could be done.

Luckily, at the time I had a voracious appetite for anything that looked like it could teach me how to program and I had read everything remotely technical I could find on the internet. I had, at the time, recently read about how to use Mac OS's built in debugger to save the contents of RAM to a file on the hard disk and I guessed that this could be used to recover my brother's letter. It took me a couple of goes to remember how to do it as I couldn't have the tutorial open while I was in the debugger but I did eventually remember. Shortly afterwards we had a 4MB file sitting on the hard drive that hopefully contained my brother's letter. A quick search through the file and we had recovered nearly all of the letter and put it back in SimpleText where it belonged.

Fast forward to today and things have changed a bit. Operating systems don't have built in debuggers that you can invoke with a keystroke (Well, some do, but not usually by default.) and 4MB of RAM is not considered enough to stir your coffee with, let alone boot a kernel into.

None the less, there are still things that can be done if you don't panic and are willing to think about your problem a bit. In my case, I was busy writing up a new blog post. Quite a rant if I remember correctly. I had poured my anger into the keyboard and was just going through it once more to check for spelling errors before posting it when Firefox disappeared. Gone. No warning, no crash dialog, no error message. Just gone.

Immediately I started Firefox back up again hoping I could recover my rant. I didn't want to have to type it all out again. I was hoping that when it restored my session with all its tabs it would also restore the contents of the blog post field. Alas, it was not to be.

Since that idea had failed to produce any results, I tried the same trick that worked for my brother all those years ago: Save the contents of RAM to a file on the hard disk and look through it for what I had just been writing. Not being sure of how to do this, I fell back on something I did know how to do: copy the contents of virtual memory. I checked /etc/fstab to find out where my swap partition was and then typed dd if=/dev/hdd5 of=/home/dave/swap_partition on the command line.

This saved the contents of swap to a file. Next, I ran the command strings swap_partition > swap_strings.txt which grabbed anything that looked like an ASCII string out of that file. Basically, any text in virtual memory would now be in the file swap_strings.txt. With trepidation, I grepped through the file for a word I knew I had typed several times in the blog post. Nothing. I tried another word, and another. Although I was finding plenty of occurrences of the words, none of them were part of the blog post I had just written.

Since another idea had failed to recover my work, I needed to think again. Where else could this data have been saved? Logically, the next most likely place was the .mozilla directory in my home directory. This is where Firefox saves all of your user-specific profile settings. Under Windows this would be in C:\Documents and Settings\Username\Application Data and on a Mac it would be in /Users/username/Library/Application Support.

Firefox saves all the tabs and all the windows you currently have open on a frequent basis so that if it crashes or shuts down untidily for any reason, at any time, it can start up again exactly where it was and recover any work you were doing. In my case, Firefox had opened all my tabs and remembered what was in the text fields such as the headline and date fields and I had been hoping that it would remember the textarea which contained the majority of the post. I was to be initially disappointed.

Although Firefox hadn't filled the large textarea in when I had returned to the page, I had a feeling that it may have been saving its contents somewhere on disk even though it hadn't been automatically recovered. Sure enough, I ran the strings command over every file I found in the .mozilla directory and one of them - sessionstore.bak - had my blog post in it. The data appeared to have been URL encoded and was mixed up with every other bit of data about the session that had just crashed but neither of these problems was difficult to work around. A few quick search-and-replace commands later and I had recovered all of my writing.
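The search-and-replace step could also be scripted. The following is a rough sketch, assuming the text really is URL encoded (percent escapes, with '+' standing for spaces) somewhere inside the file; the exact layout of the session file isn't described here, so the function just decodes everything and returns a window of text around each match. The function name is made up.

```python
import re
from urllib.parse import unquote_plus


def find_decoded(raw: bytes, keyword: str, context: int = 200):
    """URL-decode a recovered file and return the text around each keyword hit."""
    # Undecodable bytes are replaced rather than raising, since a crash
    # recovery file may contain all sorts of debris.
    text = unquote_plus(raw.decode("utf-8", errors="replace"))
    hits = []
    for match in re.finditer(re.escape(keyword), text):
        start = max(0, match.start() - context)
        hits.append(text[start:match.end() + context])
    return hits
```

Pick a phrase you remember typing, pass in the bytes of the recovered file and read through whatever comes back.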

Maybe this will work for you, maybe it won't. The important thing to remember is that even though your data may look to be gone, there's still probably another copy of it floating around somewhere and if you know a couple of good tricks, you might just be able to recover it.

2007-10-16 - Update: I did a bit more research and found out how to dump the contents of RAM and the contents of any single process.

[dave@dave-desktop:~] # sudo cat /dev/mem | strings > ~/mem

[dave@dave-desktop:~] # sudo gcore -o ~/coredump pid

The first command will save any ASCII string in RAM to the file mem in your home directory. To save the entire contents of RAM, just remove the | strings part of the command. This will save all the RAM, even if there isn't a running process using some part of it.[1]

The second command will save the memory of the process pid where pid is actually the process id of the process whose memory you want to dump.

I also found a great page on someone else's experiences with MacsBug almost exactly mirroring mine.

[1]: I tested this by starting vi and typing in "thisisanabsolutelyuniqueteststring", killing the vi process without saving the file and running the command above immediately with a small modification. Instead of piping the output to a file, I piped it to grep thisisanabsolutelyuniquetest. The grep command found itself, as it always does, but it also found the original string, identified by the rest of the unique string that I didn't include in the grep command.

You have to be careful when searching through running memory. I now remember having this problem with the Mac all those years ago. Whenever I searched for parts of my brother's letter, I would just end up finding the part of memory that contained the search string.

Burning water not so hot after all

8am, 16th September 2007 - Geek, Rant, News

Some random Cancer researcher discovers a way to make salty water "burn" by firing radio waves at it. He shows his mates from the Chemistry department and they all get quoted by a reporter as saying that "we want to know whether the energy released will be enough to power a car". The article is copied around everywhere (I have no idea which one was the original.) The world goes crazy.

Think about it for a minute. This is just another perpetual motion machine disguised as a chance discovery by scientists in an unrelated field. People think they've found a way to violate the laws of thermodynamics all the time. Some of them labour under the delusion for quite some time, others realise their mistake but see the potential for a scam and others quietly go back to their research and hope no one noticed their mistake.

If you thought you had just discovered a new, totally clean, excessively abundant energy source, why would you invite a chemist to see it ? Why weren't any physicists invited to see this amazing burning water ? Where are the venture capitalists ? Where is the patent office ?

If any of those people were to become involved in this, they would ask the obvious question: where does the energy come from ? Water has very little energy stored in a way that can be released. It has quite a lot of entropy. Firing radio waves at water causes the hydrogen-oxygen bonds to weaken but requires energy. If you were to measure it, my money would be on the amount of energy being put into the system in the form of radio waves being slightly greater than the amount of energy extracted from the system in the form of heat. There would also be some unmeasured heat loss and other energy loss in the form of sound and light.

There are two further possibilities. One is that these guys have discovered a new, lower energy, higher entropy form of water that up until now had never been discovered. Maybe there's an extra neutron in there now and they've discovered a cheap way of making Deuterium (heavy water). Maybe there's something weird going on with positrons. Maybe they've successfully achieved cold fusion. Although at 3000 degrees it wouldn't be considered cold any more.

Maybe there's a reason why physics should be left to the physicists. The answers are not in yet but my money is most definitely on this being recorded as a fascinating curiosity, but not a new fuel source.

P.S. To the guy who said that water is the most abundant resource on earth, if I remember my High School Physics correctly, the most abundant compound on Earth by weight is Silicon Tetra-Oxide. This means that although around 29% of the Earth is Oxygen, a fairly large proportion of that is not water. In fact, I just looked it up and apparently around 0.02% of the Earth by weight is water.[1]

Swedish security researcher exposes plaintext passwords found while sniffing Tor

10pm, 12th September 2007 - Geek, Rant, News, Web, Security, Legal

As reported on Ars Technica, The Register, Heise Security and Slashdot, the Swedish security researcher Dan Egerstad of DEranged Security has thrust into the limelight a security issue that has been plaguing concerned security technicians for years. Unfortunately, many of the news stories either miss the point entirely or misrepresent Tor as being something it is not and the security vulnerability as being something it, too, is not.

Tor (The Onion Router) aids anonymity. Anonymity is closely related to privacy. Privacy and security often go hand in hand. Therefore, Tor is a secure network.

Wrong ! Three of the above statements are correct but the conclusion drawn from those is not. Tor is not a magic silver bullet for security and privacy. You can't just hook up to the Tor network and expect that everything you do is now secure.

Now that I have that off my chest, let's look at the security research. Research that, completely coincidentally, a friend of mine and I had been discussing last week in our own attempt to do a very similar thing: find an appropriate point on a network, set up a packet sniffer and publish every username/password combination we find in an effort to push the encrypted-logins-only agenda. We're in favour of SSH over Telnet or rlogin, scp over rcp, SFTP over FTP and HTTPS over HTTP.

It's always interesting looking at what people actually choose as passwords. Some of them look to be a good mix of uppercase letters, lowercase letters and numbers, some are just lowercase and numbers, some are just lowercase and some are just numbers. I saw one that was 13 random characters long and another that was literally '1234'. I also saw 'temp' and 'Password' as passwords. I did see a few passwords that had symbols but none with any special characters. (Considering that most of these embassies speak languages other than English, this seems strange...) Even without the aid of packet sniffers, some of these passwords seem trivially easy to brute force.

Some people didn't quite understand what had happened. I'm not mentioning any names but don't fret; Dan did. Dan's site was taken down as requested. There's a well known saying about horses and stable doors that seems to apply here. Worse still, Dan's site had (and still has) instructions on what the actual vulnerability is and why it's a problem. Something that most of the news stories about his research failed to pick up on.

Now, on to the debacle of Chinese whispers around any news site catering to pseudo security that ensued. Each one quoting the last one until the message was completely lost. I suspect that The Register were deliberately sensationalising their headline: "Tor at heart of embassy passwords leak" just to get a few extra readers. Many of the news stories focussed on the fact that it was a Tor exit node that the sniffer was running on when in fact this was merely incidental to the real story. Let me state this very clearly: This could have been ANY machine on the route between the client and the server. Tor made it relatively easy for Dan to get on that route but it's certainly possible to achieve without Tor. The vulnerability is that the usernames and passwords are sent in plain text across an untrusted network (and what network of any moderate size can be trusted ?)

There have been some moderate and intelligent responses to all of this. If you filter your Slashdot discussion just the right way, some serious insight (rather than incite) can be gained into issues associated with the one raised. One user points out that Tor should not be used for tasks that can identify you. Another responds that sometimes you want to hide not who you are but where you are. Yet another user suggests that employees would be fired from government positions for using Tor.

One thing missing, however, is a sense of concern about the implications of this. Everybody seems to be treating it as a warning: Look what could happen if you don't encrypt your network traffic. Bad people could get hold of your passwords ! But what if the people logging in to these email accounts are not the employees we think they are ? Why would an employee need to log in to their own, personal email via Tor ? Why would a terrorist need to log in to an embassy employee's email account using Tor ? The second question appears to be somewhat easier to answer and somewhat harder to digest.

My thought is that Dan Egerstad has missed the real significance of the Tor network, possibly because he was already focussed on Tor in his research and hence didn't see it as an unusual element. The real significance is that these accounts may have been compromised some time ago and the original attackers are regularly reading all of these email accounts, simply using Tor as a method to remain anonymous. They probably have hacking skills comparable to those of the security researcher who exposed the problem and have enough concern about their own anonymity to take steps to ensure they retain it. The best our officials can come up with is a request to remove Dan's website from the internet. Now there's a worrying thought.

The smoking ban

11am, 27th August 2007 - Rant, News, Legal

Since the smoking ban came into effect on the first of July, I have inhaled more second-hand cigarette smoke than in the entire previous year. The ban forces people who used to smoke indoors to now smoke out on the street... where I am.

There's a daily gauntlet-run past the Royal Free hospital where patients, visitors and staff alike now all smoke on the street out the front of the hospital. My eyes are watering by the time I get halfway past. There's another one just before I reach my work where all the builders from the worksite next to the building I work in congregate along a pathway barely a metre wide and fill their lungs and my atmosphere with cancerous gunk.

Ironically, I used to avoid pubs a little because the smell of smoke would permeate through my clothing and hair and get worse over time. Now, pubs are a safe-house where anybody who would pollute my air must now leave and do it outside. Of course, when I want to leave, I still have to walk through the crowd of people standing just outside the door, smoking as fast as they can so they can get back inside to sit with their mates again.

Still, it's a step forward. Not because it reduces the amount of passive smoking I am forced to endure but because it enables the next step: a total ban on cigarette smoking in all public places.

Eating and watering and generally relaxing

7pm, 31st July 2007 - Humour

I found this waiting for me in Trillian when I got back from lunch the other day:

[13:55] the magdaddy: hello little one! did you make lovely logins for jane doe and john doe? do they have to come up to receive their user/pw or can i take them down for them?

[13:55] *** Auto-response sent to the magdaddy: I'm busy. No, really. I am.

[13:55] the magdaddy: no, this is the one time in the day when you are not busy - you are eating and watering and generally relaxing.

[13:55] the magdaddy: you cannot fool me.

Yes indeed, you cannot fool the magdaddy.

Apocalypse tomorrow

4pm, 29th July 2007 - Geek, Interesting, Hardware, Travel

I've always been interested in making my own alternative energy. Not so much for its potential in saving the environment (although that's important too) but more for the independence it gives me.

I am uncomfortable relying on other people and in our modern society I find myself unable to avoid relying on other people. I live in a house built by other people, I buy food that was grown by other people, I am supplied with water, electricity and gas by other people, I drive a car built by other people and I am typing this on a computer built and programmed by other people. That's part of how modern society works; because I am able to pay money to have somebody else do all these things for me I can specialise on a small number of things I can do and all the people can specialise in what they do. In short, as a whole we are more efficient because we all cooperate.

The problem I have is that if it all went away tomorrow, I wouldn't know what to do. I know how to do some of these things - not as well as the specialists - but I could do them well enough to survive. I have grown my own vegetables, found and collected my own drinking water, built myself a shelter (I can't quite go so far as to call it a house but given time I could get that far) and even generated my own electricity but I don't think that I can do any of them well enough to be comfortable. My house would have leaks and drafts, my vegetables would be small, weedy and only grow in certain times of year, if I could build some transport it wouldn't go much faster than walking pace, the electricity I generated would barely be enough to run a couple of lamps, let alone a computer.

The key to being comfortable is in preparing now. If I learn the skills then I can make my own way forwards. If I make my self-sufficient transport device now utilising someone else's help then I'll have it tomorrow, whatever happens around me. The apocalypse is not coming but the same preparations you would make for it can help even without an apocalypse. If you don't have to buy petrol for your car, you can save the money you earn in your job for something else. It doesn't matter why you don't have to buy petrol; you could grow biodiesel, generate hydrogen via solar, hydro, wind or some other power at home and power your car on that or simply convert your car to be solar or wind powered itself. Imagine riding to work each morning on one of these or one of these !

Of course, there are some problems with these modes of transport. The land kite isn't going to work in a city or in large numbers. The kites would just get tangled up in everything. The land yacht has a better chance in the cities but car drivers are still going to be frustrated with these devices on slow wind days. Solar cars have a similar problem; they aren't very good at stopping and accelerating the way you are required to in a city. They're also not very good when a skyscraper blocks the sun. Cars that charge up overnight and run off batteries tend to have a very short range.

The solution to all these problems is cooperation. Just as societies progress by having their members work on their strengths and support each other's deficiencies, a car can progress by having more than one power source available to it. If your car has solar panels, pedals, a battery, a sail and possibly a turbine, it can use the sail when it's windy, use the sun when it's sunny, use the turbine when it's traveling directly into the wind, use the pedals when everything else fails and use all of them to charge the battery when it's stopped at traffic lights or parked. The result of this would be a car that's completely self-sufficient, works all the time, produces zero emissions and doesn't cost a penny to run.

In search of an English summer

9pm, 2nd July 2007 - Rant, News, Humour, Travel

A year ago I wrote about the English summer. At that time I was skeptical about my workmate's assertion that this was uncommonly good weather for an English summer. Surely the odd day here and there that made it all the way to 30° couldn't be considered good. But this year has put me straight. Nary a day above 25° and it's been raining pretty much solidly for the last three weeks. On Tuesday, it hailed ! Jane had a snowball fight at her work by scooping up the hail, smooshing it all together and throwing it at her boss !

I take it all back ! Last summer was great ! I didn't mean to offend your summery goodness... now can I please have a little sunshine again before I turn completely white ?

iPhone and Security: Spreading the FUD.

12pm, 30th June 2007 - Geek, Rant, News, Interesting, Apple, Security, Hardware

Straight from news.com.com: "Gartner says that iPhone could punch a hole through corporate security." Apparently it "doesn't contain the necessary functionality to comply with basic corporate security."

What the... ?

Strangely enough, the page that text links to doesn't actually point out any lacking functionality in the iPhone. In fact, it completely ignores the text that links to it, mostly because while the interviewer is lacking a clue, the interviewee clearly has one. Neel Mehta, Team leader for Internet Security Systems at IBM says that the iPhone is more complex than any smart phone to date and hence has a greater potential for security flaws. He also points out that the development model for third party developers means that everything non-Apple is going to run within the sandbox environment of Safari. He also points out that the iPhone is going to be regularly connected to computers and the internet and that updates from Apple will be seamlessly integrated. The greatest security threat to the iPhone, according to Mehta, will be its own popularity. I know it won't silence the critics but hopefully the drone of "Macs are only secure because Windows is a bigger target." will lose a few decibels. Unless, of course, the iPhone is bugged by as many security flaws as Windows and Internet Explorer have been, but my opinion is that it would be almost impossible to catch up to the lead Internet Explorer has.

Further on Gartner's list

Lack of support from major mobile device management suites and mobile-security suites

Those suites are designed to prop up security that has been omitted from existing smart phones. The iPhone, being based on Mac OS X will most likely support many of the same security features and management suites that Mac OS X desktop and laptop computers currently enjoy. There is simply no need for anything beyond what already exists.

Lack of support from major business mobile e-mail solution providers

By this I presume they are referring to lack of support from RIM and their existing infrastructure for Blackberry. Why would Apple want or need that in an iPhone ? The iPhone automatically connects to any open wireless network and handles email over standard (as in IETF standard) protocols. If secure communication is required, the iPhone supports all the appropriate standards for that as well. There's no need for a dedicated, private network when you can just encrypt your communications and send them over the public internet.

An operating-system platform that is not licensed to alternative-hardware suppliers, meaning there are limited backup options

Let's split this into two parts: The OS is not licensed to alternative hardware suppliers... so what ? What's the problem with this ? There isn't another hardware manufacturer that can make iPhones and even if there were, Apple sells hardware; they don't want just anyone else to sell the same hardware just so they can stick Apple's OS on it. It would cheapen the whole experience, and I'm not talking about the money here. The second part makes even less sense; how would licensing the OS to other hardware manufacturers make available further backup options ? There are already backup options apart from syncing your contacts, appointments and emails on to a computer. All these protocols are open and Apple's iSync allows you to write plugins for backing up whatever you like. Managing backups just isn't a technical problem, it's a people problem. Making backups easy is much more likely to succeed than having a plethora of backup options.

Feature deficiencies that would increase support costs (for example, iPhone's battery is not removeable)

This is the first real complaint, but it's not a new one. People have been complaining about iPod batteries since they were first released to the public. Not being able to remove the battery has meant that many people had to send their iPods back to Apple to have them repaired and that is probably going to be less acceptable with a smart phone. Many people can barely live without their phones for the 8 hours it takes to get back home and recharge it after forgetting to charge it the night before. Smart phones would be even worse. Apple must be fairly confident that they have solved their battery issues but I predict lots of publicity for even a single failed battery in the first month.

Currently available from only one operator in the U.S.

Definitely a real complaint and one that would cause me to postpone buying one if I were in the US. I predict this will change very shortly and people who have bought an iPhone now, before other carriers come on board and the prices drop, will regret being early adopters. Maybe Apple will offer rebates or free upgrades to people who purchased an earlier, inferior product but in the past they have only done this for purchases in the month prior to the announcement/release of the new scheme. Some people will be left out in the cold.

An unproven device from a vendor that has never built an enterprise-class mobile device

Bring on the marketing-speak and weasel-words! What is the MacBook Pro ? Is that not an enterprise-class mobile device ? What is the iPod ? What is an enterprise-class mobile device ? What makes you think that a vendor that hasn't released an enterprise-class mobile device is incapable of releasing a hit on their first time ? The iPhone was labeled "unproven" because it hadn't been released at the time they wrote their article. What Gartner were implying was that Apple are an unproven company which is clearly false. Apple have been around about as long as Microsoft, they have been through good times and bad, as have both Microsoft and IBM, and they have survived. Now they are gaining serious traction amongst home users who love Apple products because they look good, just work and are fun to use. We are already seeing home users transition to business users and expect their computers to continue looking good, just working and being fun to use, even when they are being used for "work".

The high price of the device, which starts at $500

$500 is expensive, however I have already stated that I expect this price to drop in the near future. This has always been Apple's strategy; to release a product that seems expensive to start with but is so insanely good that the product you left to try out Apple's product seems dull and unexciting afterwards. In the end you realise that you pay more and get more. It's up to each individual person to decide whether the extra price is worth what you get.

A clear statement by Apple that it is focused on consumer rather than enterprise

Apple have never shied away from the enterprise. Believe me that the XServe RAID is not something that you would want in your living room. The iPhone, like the iPod is primarily designed for home consumers, not business consumers and yet Apple know that most people are both. Where I work, several of the people in my department have Apple laptops, most of them have iPods and I suspect that all of them would jump at the opportunity to use an Apple desktop instead of a Windows one every day if they could. (Not that my company doesn't support Macs... it just seems that you only qualify for one if you are an "arty" type and hence a Sysadmin who uses a terminal emulator all day, every day would be much better off with PuTTY than Apple's Terminal. Hmmph !) I would be extremely surprised if my boss didn't want to trade in his Blackberry for an iPhone. The iPhone will integrate into a corporate network as an extension to users' computers and the first people to get one will be the CTO and his team probably because the CEO already bought one and they know they're going to have to support it pretty soon.

Most handheld devices come with easy-to-use tools that enable rapid interfaces to business systems. When end users install such tools, they effectively 'punch a hole' through the enterprise security perimeter--data can be moved across applications to personally owned devices, without the IT organization's knowledge or control.

This problem is not limited to iPhones. iPods, any MP3 player, USB thumb drives, laptops, mobile phones, CD burners... even floppy disks. All of them can be used to take sensitive data out of the corporation's control. If your security department don't want third-party applications installed on their computers, lock them down so it isn't possible but this alone will not stop data leaks. The only way to keep sensitive data secret is to limit access to the data. Most of your organisation should not have direct access to sensitive data. Those that do have access should have training about how to identify and handle sensitive data. Banning iPhones from your network is not going to help stop data leaks.

The article is misguided and seems to be more than just a little self-promoting. The more FUD that is spread, the more that corporations believe that they need consultants to understand it all. So it is always in the consultant's best interests to spread FUD or have FUD spread for them. This is FUD, pure and simple and it doesn't wash.

Galumph went the little green frog one day.

8am, 9th June 2007 - Geek, Interesting, Web, Developer, Sysadmin

It's funny what you can discover when you analyse your web server logs properly. There are all sorts of things happening out there on the net and some of those things may happen to you, even if you aren't aware of them.

A couple of days ago, someone visited this very site in search of lyrics to a campfire song that I haven't heard anyone sing in nearly twenty years. How do I know this ? Well, if you do a Google search and your browser passes the referer string the way it should then the site you end up on can tell what you searched for. It's not just Google either. Many search engines support the same feature. This guy searched for "we all know frog go ladadadada lyrics" which returns precisely one page... mine. I'm not really sure why my page is the only result for that particular search but it does have the word frog on it and I appear to use the word we quite a lot. Just because I'm a helpful sorta guy, I would suggest that searching for "galumph went the little green frog one day lyrics" is probably going to get you much better results than your original search.
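If you want to pull search terms out of your own logs, the idea can be sketched in a few lines of Python. This is an illustration rather than the script I actually used: the referer URL below is invented, and the assumption that the search terms live in a query parameter called q holds for Google but other engines may use a different name.

```python
from urllib.parse import urlsplit, parse_qs


def search_terms(referer, param="q"):
    """Return the search phrase carried in a referer URL, or None.

    Assumption: the search engine puts the phrase in the query-string
    parameter named by `param` ("q" is what Google uses).
    """
    query = parse_qs(urlsplit(referer).query)
    values = query.get(param)
    return values[0] if values else None


# Hypothetical referer string, shaped like one a browser would send.
referer = "http://www.google.com/search?q=we+all+know+frog+go+ladadadada+lyrics&hl=en"
print(search_terms(referer))
```

A referer without that parameter (a plain link from another site, say) simply comes back as None.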

The most common search term that people use to find my site is "Ladadadada" but some others include "instant mee goreng", "co_conspirator", "noodly", "JAILBIRD GIFS", "gauma camping", "pizza pictures", "finish the sudoku", "can't get enough of croatia", "nebakanezer", "hippomoo newcastle", "EU PASSPORT lane". Strangely enough, MSN Live search only ever seems to direct people to my site who were searching for drugs. It's most likely that these are actually bots, trying to use the referer field to insert that search somewhere on my page and then hoping that I will do a search for a drug I haven't heard of and then want to buy it. It seems a little subtle for your average spammer. I have also received just the one referral from Ask.com. This person searched for how to open tamper proof tags and once again, there I was on the first page of the search results.

Then there's the guy who keeps trying to add comments to my blog. He loads one of the blog pages and then somewhere between one and ten seconds later he attempts to post a comment. There's more weirdness involved here however; any pair of requests (blog page then comment adding page) seem to come from the same browser but later, often on the same day, he will request the same pair of pages using a different browser and usually a different OS. So far I have seen 43 different user agent strings from the same IP address exhibiting the same behaviour. 13 different operating systems including Windows NT 5.0, Windows NT 5.1, Windows NT 5.2, Windows CE, Windows 95, Windows 98, WinNT4.0, Windows XP, RISC OS, WebTV OS, Mac OS 9, Mac OS X, Ubuntu and some other version of Linux. Some of the operating systems were in Russian and some in German. I have also seen 20 distinct web browsers including IE 3.02, IE 5.0, IE 5.5, IE 6.0, IE 7.0b, Opera 5.0, Opera 7.54, Opera 8.0, Opera 8.5, more versions of Firefox than you can poke a stick at (all counted as Firefox), Sylera, Galeon, K-Meleon, Phoenix, Spacebug, Minefield (all of which are builds of Firefox), Omniweb, Acorn, AOL 9.0, WebTV. After all this monkeying around with user agent strings, whatever script is actually creating all these requests isn't even behaving like a real browser. Firstly, unlike a real browser, it doesn't request the supporting parts of the page such as the stylesheets and images. Secondly, it doesn't resolve the form action base URL properly and hence all these comments end up going to a 404. In other words, whatever stock pumping / drug promoting blog comment spam he's trying to insert into my page, it's not working and he still hasn't noticed.
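Counting user agents per IP address is easy enough to script. The sketch below assumes the log is in Apache's "combined" format (my choice for the example; adjust the pattern if yours differs) and the three sample lines are made up, using an address from the documentation range.

```python
import re
from collections import defaultdict

# Assumed layout: Apache "combined" log format.
# Group 1 is the client IP, group 2 is the user agent string.
LINE = re.compile(
    r'^(\S+) \S+ \S+ \[[^\]]*\] "[^"]*" \d{3} \S+ "[^"]*" "([^"]*)"'
)


def agents_by_ip(lines):
    """Map each client IP to the set of user agent strings it sent."""
    seen = defaultdict(set)
    for line in lines:
        match = LINE.match(line)
        if match:  # silently skip lines that don't fit the pattern
            seen[match.group(1)].add(match.group(2))
    return seen


# Hypothetical log lines for illustration only.
sample = [
    '203.0.113.9 - - [09/Jun/2007:08:00:01 +0100] "GET /blog/ HTTP/1.1" 200 5120 "-" "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1)"',
    '203.0.113.9 - - [09/Jun/2007:08:00:05 +0100] "POST /comment HTTP/1.1" 404 210 "-" "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1)"',
    '203.0.113.9 - - [09/Jun/2007:14:30:00 +0100] "GET /blog/ HTTP/1.1" 200 5120 "-" "Opera/8.0 (Windows NT 5.0; U; en)"',
]

for ip, agents in agents_by_ip(sample).items():
    print(ip, len(agents))
```

An address that shows up with dozens of distinct user agents is a good candidate for a closer look.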

Like I always say: it takes all sorts to make this crazy world.

A tale of duelling GRUBs and boots.

8am, 26th May 2007 - Geek, Linux, Sysadmin

Last night I finally coerced GRUB into doing what I wanted it to do. It's not that GRUB was particularly stubborn but more that it had a particular place that it liked to look for its instructions on what to do and wouldn't do anything at all if it didn't find them in that place. Of course, no one tells you what this file is called... you don't really understand GRUB until you have searched half the internet trying to figure it out.

Let me start from the start. I'm installing Linux because I have a low spec box (650MHz, 128MB, 16GB) and I think I can get better performance out of a tightly controlled Linux installation than a sprawling, all-encompassing Windows XP installation. Jane, however, has a fear of Linux, possibly caused by some sort of Linux based trauma while she was at University and hence I can't get rid of Windows just yet. It would be nice to install Ubuntu and just be done with it but unfortunately the two different versions of Ubuntu I have tried both require more RAM than I have. They would both boot from the Live CD if you had about half an hour to spare but as soon as you tried to do anything more complicated such as opening a program the system would grind to a halt. Hence I settled on Damn Small Linux, a distro designed for its small size and low requirements while still being flexible enough to grow into a full distribution once installed. I could have my cake and eat it too !

After burning the LiveCD (it seems such a waste that only 50MB of the CD was actually used) and throwing it in my computer, it booted straight into a desktop that was only using 18MB of RAM. Even browsing the Net with Firefox I was still only using 33MB. DSL looked like it was going to fit into my tight RAM requirements quite well. I told it to install itself to my hard drive and then looked up a tutorial on how to dual boot Linux and Windows, having never done that before.

Inevitably, you will end up on the first link from Google for "dual boot Linux Windows" which is Ed's software guide on Linux. Unfortunately, Ed's guide seems a little out of date and not all that accurate. He suggests using fips for your partitioning needs but the link he supplies is no longer current. I found that burning a gparted live CD provided more than just the best tool money can buy for free. The particular CD I burned has Grub installed with about as many options for booting as you can imagine, so once you have started messing around with GRUB and can't boot your own system any more, you can still put in the gparted CD, load GRUB and tell it to boot "The first partition from the second disk" or wherever your favourite OS is. His instructions also seem convoluted and unnecessary. In the end he seems to be using the Windows boot loader to give the choice between loading Windows normally or loading GRUB which will then load Linux. My final result was a little different. I loaded GRUB from a 40MB boot partition and then gave GRUB the choice between Linux and Windows. The advantage of this way is that the process of getting it all working is actually less complicated than Ed's instructions. The most useful guide to GRUB (and hence dual booting) I found was GRUB from the ground up from Troubleshooters.

Now, for the list of gotchas and caveats. Firstly, I used gparted to shrink my Windows partition by 40MB and move it to the right so I could fit a 40MB partition in before it. This took about two hours for a 50% full 16GB drive: one hour for a read-only test and one for the real thing. Your mileage may vary. The reason I did this is that famous 1024 cylinder limit. I don't know if my computer can boot from beyond the first 1024 cylinders but I didn't feel like taking the chance. Linux was going to be on a separate physical drive anyway so I would need a boot loader on the first drive (the BIOS calls it the C: drive) to load Linux from the second. I also noticed some flags you could set for each partition in gparted but while reading Ed's guide I was a little reluctant to mess with them because he makes it sound like you'll need to reformat everything and re-install if you screw up your MBR. My computer persistently kept on booting into Windows until I moved the "boot" flag from the Windows partition to my brand new boot partition. It seems obvious now but it just goes to show how a little knowledge is a dangerous thing.

Once I had changed that "boot" flag, I was presented with the GRUB command line when my system started up. It looks scary but it's not really all that bad if you have used the Unix command line before. You can use it to interrogate your hard drives and search for files (such as the Linux kernel) or figure out what hard drives you have or, if you're feeling wild and rebellious, you could use it to boot into one of your available operating systems. Until I figured out the GRUB configuration file I was booting Linux by typing three commands at this boot prompt every time I restarted. The commands I typed are explained in detail on the Troubleshooters page I linked to earlier and are included in my sample GRUB menu file at the bottom. The first command tells GRUB where it can find the rest of itself. GRUB's first stage can only be 512 bytes and if it's doing anything more complicated than handing control over to Windows then it will need to load some more code. root (hd0,1) refers to the second partition (,1) of the first hard drive (hd0) and this is the location of GRUB's stage 2. The second command kernel /boot/linux24 root=/dev/hdb5 tells GRUB where to find the Linux kernel (kernel /boot/linux24) and where Linux should mount its own root filesystem from when it loads (root=/dev/hdb5). If those two commands print confirmation messages rather than error messages then the third command is simply boot.
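The zero-based numbering in root (hd0,1) is the part that tripped me up most, so here is a small Python sketch that spells a GRUB device out in words. The function name describe_grub_device is my own invention for illustration; it is not part of GRUB and it only understands the simple (hdX,Y) form used in this post.

```python
import re


def describe_grub_device(spec: str) -> str:
    """Describe a GRUB legacy device such as '(hd0,1)' in plain words."""
    match = re.fullmatch(r"\(hd(\d+),(\d+)\)", spec.strip())
    if match is None:
        raise ValueError(f"not a simple (hdX,Y) device: {spec!r}")
    disk, partition = (int(group) for group in match.groups())
    # GRUB legacy counts both drives and partitions from zero,
    # so we add one to get the number a human would say out loud.
    return f"partition {partition + 1} on hard drive {disk + 1}"


print(describe_grub_device("(hd0,1)"))  # the boot partition in this post
print(describe_grub_device("(hd0,0)"))  # the Windows partition in this post
```

Note that Linux names the same devices differently (/dev/hdb5 in my kernel line), so this sketch only helps with the GRUB side of things.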

To make things a little easier, you can tell GRUB some of these commands in advance and group them together under convenient labels such as "Windows" and "Linux". If you are installing a new Linux kernel you can set up a new group called "Linux with new kernel" and give it a different kernel statement. There seems to be some confusion over whether these instructions should go in a file called grub.conf or a file called menu.lst. I found that grub.conf didn't work for me no matter where I put it but once I put the same contents into a menu.lst file, it worked straight away. Below is what I have in my menu.lst file.

timeout 10

default 1

title Linux

root (hd0,1)

kernel /linux24 root=/dev/hdb5

title Windows

rootnoverify (hd0,0)

chainloader +1

The location of this file and others needed by GRUB may cause some confusion. It is common to mount your boot partition under /boot on a Linux system however it is not strictly necessary to mount it at all after you have created it. In fact, you can create it without mounting it but I wouldn't recommend that until you are an expert. The confusion arises because when GRUB mounts your boot partition, it doesn't mount it as /boot. GRUB will mount it as / which means that any path you specify to GRUB that has /boot at its start will not work. Some people suggest using a symlink in your boot partition that looks like this: (boot -> .) This symlink will allow the same path to work for GRUB when the partition is mounted at / as for Linux when the partition is mounted at /boot. Another option would be to install all of GRUB's files in /boot/boot/grub/ and symlink (grub -> boot/grub) to make Linux do the symlink chasing rather than GRUB. In either case, if GRUB can load then you can put your files (grub.conf or menu.lst) in the same place as GRUB's stage1 and stage2 files and feel safe in the knowledge that GRUB will find them.

I hope my little story has made it easier for you to get your system dual booting or simply booting at all.

Distribution and layers

8pm, 2nd May 2007 - Geek, Interesting, Web, Security, Developer, Sysadmin

Lately, I've noticed that the application of layers and distribution to a great many things seems to improve nearly every aspect of the thing in question. It may seem obvious when it's pointed out but for me, at the time, it was the application of a well known principle in computer science to areas outside computer science and the astonishment that the principle continued to work.

I think I was first exposed to distribution when I discovered the distributed.net project. The idea was that sometimes a problem that would take one person an entire lifetime to solve can be solved by 1000 people in 1/1000th of a lifetime. When a problem is able to be split up like that (it is said to be parallelisable or distributable) then it makes sense to share the workload out over an appropriate number of people to be able to finish the job on time. In the case of distributed.net, a problem that was supposed to be unsolvable in any practical amount of time (decrypting an encrypted message) was actually solved in 22 hours!
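To make the idea concrete, here is a minimal Python sketch of splitting a key search into chunks. It is not how distributed.net actually works; the key space size, the number of workers and the SECRET value are all made up for illustration, and the "workers" here simply run one after another rather than on separate machines.

```python
from typing import Callable, List, Optional


def split_keyspace(total_keys: int, workers: int) -> List[range]:
    """Divide keys 0..total_keys-1 into one contiguous range per worker."""
    base, extra = divmod(total_keys, workers)
    ranges, start = [], 0
    for index in range(workers):
        # The first `extra` workers take one additional key each.
        size = base + (1 if index < extra else 0)
        ranges.append(range(start, start + size))
        start += size
    return ranges


def search(chunk: range, is_correct: Callable[[int], bool]) -> Optional[int]:
    """Try every key in one chunk and return the one that works, if any."""
    for key in chunk:
        if is_correct(key):
            return key
    return None


SECRET = 48_611  # hypothetical key, for illustration only
chunks = split_keyspace(total_keys=100_000, workers=1000)
results = [search(chunk, lambda key: key == SECRET) for chunk in chunks]
found = [key for key in results if key is not None]
print(f"each worker tried at most {len(chunks[0])} keys, found {found}")
```

Each chunk can be searched without knowing anything about the others, which is exactly what makes the problem distributable.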

Improving the time to solve a problem by distribution is not only applicable to large problems that can be split up into chunks but, not surprisingly, also to large numbers of small problems. Web servers are a very commonly used example. You may request a web page from a server and receive a response - your web page - yet when you make the same request later, it may be served by a completely different server. The magic behind the scenes is usually a load balancer in front of a number of web servers. The load balancer is designed to make sure each of the web servers is doing an appropriate amount of work and should be invisible to the end user.
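A rough Python sketch of the simplest balancing strategy, round robin, looks like this. The class name and the server names are hypothetical, and real load balancers also consider server health and current load, which this sketch ignores.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hand each incoming request to the next server in turn."""

    def __init__(self, servers):
        self._servers = cycle(servers)  # endless iterator over the servers

    def handle(self, request: str) -> str:
        server = next(self._servers)
        return f"{server} served {request}"


balancer = RoundRobinBalancer(["web1", "web2", "web3"])
responses = [balancer.handle("/index.html") for _ in range(4)]
for line in responses:
    print(line)
```

The visitor only ever talks to the balancer, so the fourth request going back to the first server is invisible to them.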

There are several advantages of using a load balancer in front of many web servers rather than buying a faster, more expensive machine that can handle the same load all on its own, and the first one is price. As a website grows it often starts with just one small web server but eventually this solitary web server won't be able to keep up and at this point it is much cheaper to buy a second small server with a load balancer than to buy a new server with twice the capacity and throw the old one out. Overall, you may have spent the same amount of money but the spending was small when your website was small and grew in proportion to your website. Other advantages can be even more compelling - one of my favourite benefits of distribution is reliability. If you have 10 web servers and one of them crashes, each of the other 9 will have to do about 11% more work but your website will keep on working. The failure will not affect your visitors.
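The reliability arithmetic generalises: when one of n equally loaded servers fails, each survivor picks up 1/(n-1) of its previous load, which for ten servers is a little over a tenth. A quick Python sketch, assuming the load is spread perfectly evenly (the function name is mine):

```python
def extra_load_after_failure(servers: int, failed: int = 1) -> float:
    """Fractional increase in work for each surviving server."""
    survivors = servers - failed
    if survivors <= 0:
        raise ValueError("no servers left to take over the load")
    # Each server went from 1/servers of the load to 1/survivors of it.
    return servers / survivors - 1


print(f"{extra_load_after_failure(10):.1%}")  # 11.1%
print(f"{extra_load_after_failure(2):.1%}")   # 100.0%
```

The more servers you have, the less each failure hurts, which is why two servers is a much bigger step up from one than eleven is from ten.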

The two principles at work here (with the distributed.net project and the web server balancing) are that a lot of almost nothing adds up to something and that a system is more reliable if it has no single point of failure.

Layers are really just a specialised application of distribution where all of the elements are chained together and each element in the chain does a slightly different job. A job is only passed along to the next element in the chain if the current element of the chain can't complete it. Often, the chain is ordered so as to optimise its own efficiency.

To continue with the example of the web servers, in order to speed up the response times of web servers, web masters use caching. Caching involves taking the result of a long, slow process and remembering that result. The next time somebody asks us for the result, we just hand them the copy we remembered (our cached copy) rather than calculating the result again. Caching usually improves access speed and reduces calculation time at the expense of using up more memory however as memory is often quite cheap, this trade-off is usually worthwhile. Caches usually have rules about how long they are allowed to remember a certain result so that they don't continue to remember a result that is incorrect or stale.
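Here is a minimal Python sketch of a cache with an expiry rule. The TTLCache class and the slow_square function are hypothetical names for illustration; the clock is passed in so the example can fake the passage of time instead of actually waiting.

```python
import time


class TTLCache:
    """Remember results for a limited time so stale answers get recomputed."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # key -> (expiry time, value)

    def get(self, key, compute):
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # still fresh: hand back the remembered copy
        value = compute(key)  # missing or stale: do the slow work again
        self._entries[key] = (now + self._ttl, value)
        return value


calls = []


def slow_square(n):
    calls.append(n)  # record every time the slow path really runs
    return n * n


fake_now = [0.0]
cache = TTLCache(ttl_seconds=60, clock=lambda: fake_now[0])
cache.get(7, slow_square)  # computed
cache.get(7, slow_square)  # served from the cache
fake_now[0] = 61.0         # pretend a minute has passed
cache.get(7, slow_square)  # stale, so computed again
print(len(calls))          # 2
```

The memory cost is the dictionary of remembered results; the saving is every call to the slow function that never happens.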

Caching is broadly applicable and can be implemented in many places within the system. The SQL server can cache results of certain queries so it doesn't have to calculate them again when the web server requests them, and the web server can cache its own copy of the results of the same queries so it doesn't even have to ask the SQL server for them. Sometimes, in front of the web server, there is another specialised web server called a proxy server which can also cache the page generated from the SQL queries by the web server so that it doesn't have to generate that page again. As caching happens closer and closer to the source of the request, the advantage grows larger and larger. Sometimes your ISP will cache pages or parts of pages and not even ask the website to send its copy to you but rather just send their own. Your own computer even caches pages and parts of pages you have requested so that if you request something a second time, it already has it and just gives it to you straight away. In this way, a request for a web page can be distributed over many different computers and the result is a much faster page on your screen. The other main advantage of distribution still applies here; if the SQL server crashes or the web server crashes, you may not even be aware of it because your entire page was served from a cache closer to you than either of those two servers.
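The chain of caches can be sketched in a few lines of Python. This is a toy model under my own assumptions: each layer is just a dictionary, the layer names are illustrative, and nothing ever expires.

```python
def fetch(url, layers, origin):
    """Return (content, name of the layer that answered the request)."""
    missed = []
    for name, store in layers:
        if url in store:
            content, source = store[url], name
            break
        missed.append(store)
    else:
        # No cache had it, so go all the way to the real server.
        content, source = origin(url), "origin"
    # Fill the closer layers that missed so the next request stops sooner.
    for store in missed:
        store[url] = content
    return content, source


def web_server(url):
    return f"<html>page for {url}</html>"


def crashed_server(url):
    raise ConnectionError("web server is down")


browser, isp_cache, proxy = {}, {}, {}
layers = [("browser", browser), ("isp", isp_cache), ("proxy", proxy)]

first = fetch("/news", layers, web_server)       # travels all the way to the origin
second = fetch("/news", layers, web_server)      # answered by the closest cache
third = fetch("/news", layers, crashed_server)   # the crash goes unnoticed
print(first[1], second[1], third[1])
```

The third request shows the reliability benefit: the server is down but the page still arrives because the request never gets that far.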

Layering applies to more than just caching however. Spam filtering works quite well with many layers. Your ISP probably employs many layers of filters in order to prevent spam from reaching your inbox. Acme.com has a very good write-up on filtering spam using layers. One nice thing about these layers of filters is that you can use the results of later filters to modify earlier filters in order to reduce the total workload. Because the filters are applied in an optimised order, if a job is filtered at an earlier level it actually takes less work to achieve the same result than if it were filtered at a later level. This is still true even when you ignore the workload of all the filters in between that don't even filter the job. Caching can be seen as a series of filters that are filtering a request for a web page. As soon as one filter can resolve the request it does so and doesn't pass the request any further along the chain.
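A toy Python sketch of layered filtering with feedback follows. It is not how Acme.com or any real ISP does it; the filter names, the blocklist and the banned words are all invented for illustration. The cheap sender check runs first, and whenever a later filter catches a message its sender is fed back into that first layer.

```python
def run_filters(message, filters, blocked_senders):
    """Return (verdict, number of filters that had to run)."""
    for count, (name, is_spam) in enumerate(filters, start=1):
        if is_spam(message):
            # Feed the result back so the cheapest filter catches this sender next time.
            blocked_senders.add(message["from"])
            return f"rejected by {name}", count
    return "delivered", len(filters)


blocked = {"spammer@example.com"}  # hypothetical starting blocklist
banned_words = {"lottery", "prize"}

filters = [
    # Cheapest check first: a simple set lookup.
    ("sender blocklist", lambda m: m["from"] in blocked),
    # More expensive check: scan the whole body.
    ("keyword scan", lambda m: any(w in m["body"].lower() for w in banned_words)),
]

spam = {"from": "new@example.net", "body": "Claim your PRIZE today"}
first = run_filters(spam, filters, blocked)
second = run_filters(spam, filters, blocked)
ham = run_filters({"from": "jane@example.org", "body": "Lunch?"}, filters, blocked)
print(first, second, ham)
```

The second copy of the spam is stopped one layer earlier than the first, which is the workload saving described above.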

Another area where layering can create advantages is security. This is a principle often known as defence in depth. (Defence in depth also covers other areas but for the purposes of this discussion, it means layers.) In this case, the layer is usually not created in front of the existing layer, but behind it. For example, you might place a firewall at the external perimeter of your network to restrict access (layer 1) and then also place firewalls on each of your hosts within the network (layer 2). It may seem, if the firewalls were configured identically, that anything that made it through the first layer would also make it through the second layer but it is not so. If an attacker avoided the outer firewall by exploiting an unsecured wireless network set up by an employee within the building, then the firewalls on each host would be the only thing protecting the data contained within. Having said that, there is nothing that requires the firewalls to be identical. In fact, you should make each firewall as restrictive as possible (but not more so) on a case by case basis. Layering improves security because an attacker has to break each layer of security separately and if any one layer fails, the next likely will not.
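The wireless network scenario can be modelled in a short Python sketch. The port numbers and rule sets are assumptions chosen for illustration, and a real firewall looks at far more than the destination port.

```python
PERIMETER_PORTS = {80, 443}  # hypothetical: what the outer firewall lets in
HOST_PORTS = {443}           # hypothetical: what this particular host accepts


def perimeter_firewall(packet):
    return packet["port"] in PERIMETER_PORTS


def host_firewall(packet):
    return packet["port"] in HOST_PORTS


def reaches_host(packet, path):
    """A packet arrives only if every firewall on its path lets it through."""
    return all(firewall(packet) for firewall in path)


internet_path = [perimeter_firewall, host_firewall]
rogue_wifi_path = [host_firewall]  # attacker is already inside the perimeter
unprotected_path = []              # the same attacker if hosts had no firewall

probe = {"port": 3306}  # an attempt to reach a database port
print(reaches_host(probe, internet_path))     # False
print(reaches_host(probe, rogue_wifi_path))   # False
print(reaches_host(probe, unprotected_path))  # True
```

Skipping the outer layer only helps the attacker if nothing stands behind it, which is the whole argument for the second layer.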

So far, these examples have been all very computer related but thinking about them made me wonder if the principles involved might apply to areas not related to computers. It didn't take long for me to find some. Power generation is a good one. If every home has some alternative source of power generation - a wind turbine, solar panels, whatever they like - then the advantages of distribution take hold. The power station has reduced load and if a disaster strikes bringing the power lines down it affects fewer people and those not as badly. People can grow their own vegetables too. This means reduced load on farmers (and the land) and more reliability if the farmers are unable to deliver the vegetables for some reason.